5 Tips To Get Top SEO Results


Every marketing professional knows that ranking at the top of search engine results is important and that SEO will help get them there. You may also have heard that SEO is challenging to learn, but the truth is that SEO doesn't have to be difficult, and it can even be pretty fun!

These 5 basic SEO techniques are easy to implement and will have you on your way to the top of search engine results:

- Help the search engine bots understand your site

- Eliminate any technical issues harming your search ranking

- Match your content to your audience’s search intent

- Write quality content

- Optimize the SEO of key pages on your site

1. Help the search engine bots understand your site

A well-built sitemap.xml file and robots.txt file are the initial building blocks of SEO success. Together, these two files tell Googlebot what to crawl and what not to crawl on your website.

Use a sitemap to help the robots understand your site structure

Make sure you have a sitemap and that it has been submitted to Google Search Console. A sitemap is an XML file listing the URLs you want included, along with optional details such as each page's priority, last-modified date, and change frequency. It helps Google discover and crawl all of the pages on your website that you want to appear in Google search results. You're essentially giving Google a cheat sheet for your website. Googlebot doesn't require a sitemap to crawl your website, but providing one makes crawling easier and more efficient.
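To make the file's structure concrete, here is a minimal Python sketch that writes a sitemap with the standard library's `xml.etree.ElementTree`. The function name `build_sitemap` and the example.com URLs are hypothetical. It emits only `<loc>` and the optional `<lastmod>`: the sitemaps.org protocol also defines `<priority>` and `<changefreq>`, but Google has said it ignores those two values, so treat them as optional at best. In practice, a CMS plugin or generator tool would usually produce this file for you.

```python
import xml.etree.ElementTree as ET

# XML namespace required by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for pages, each a dict with "loc" and optional "lastmod"."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]  # full, absolute URL
        if "lastmod" in page:
            # W3C datetime format; a plain date such as 2024-01-15 is allowed.
            ET.SubElement(url, "lastmod").text = page["lastmod"]
    body = ET.tostring(urlset, encoding="unicode")  # special characters are escaped
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap([
        {"loc": "https://www.example.com/", "lastmod": "2024-01-15"},
        {"loc": "https://www.example.com/programs/computer-science"},
    ]))
```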

Maybe you already have a sitemap in place. Navigate to yoururl.com/sitemap.xml to verify, and confirm it’s up to date. If you use a CMS like Drupal or WordPress, a plugin is likely generating it for you. It’s also possible that someone at your organization generated the sitemap manually, in which case it could be out of date. You can generate a new one at www.xml-sitemaps.com if needed.
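If you want to check "up to date" without eyeballing the whole file, a small script can flag entries whose `<lastmod>` is missing or old. This is a sketch under a few assumptions: the hypothetical `stale_entries` function expects a plain `<urlset>` sitemap (not a sitemap index that points to other sitemaps), and the 365-day cutoff is an arbitrary placeholder you should tune to how often your content actually changes.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

# Prefix mapping for the sitemaps.org namespace, used by findall/findtext.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def stale_entries(sitemap_xml, today, max_age_days=365):
    """List (loc, lastmod) for URLs whose lastmod is missing or older than the cutoff."""
    root = ET.fromstring(sitemap_xml)
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=NS).strip()
        if not lastmod:
            stale.append((loc, None))  # no date at all: worth a manual look
        elif date.fromisoformat(lastmod[:10]) < cutoff:
            # lastmod may carry a time and offset; the first 10 chars are YYYY-MM-DD.
            stale.append((loc, lastmod))
    return stale
```

To run it against a live site, you could download the file first (for example with `urllib.request.urlopen("https://yoururl.com/sitemap.xml").read()`) and pass the result in, since `ET.fromstring` accepts bytes as well as strings.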

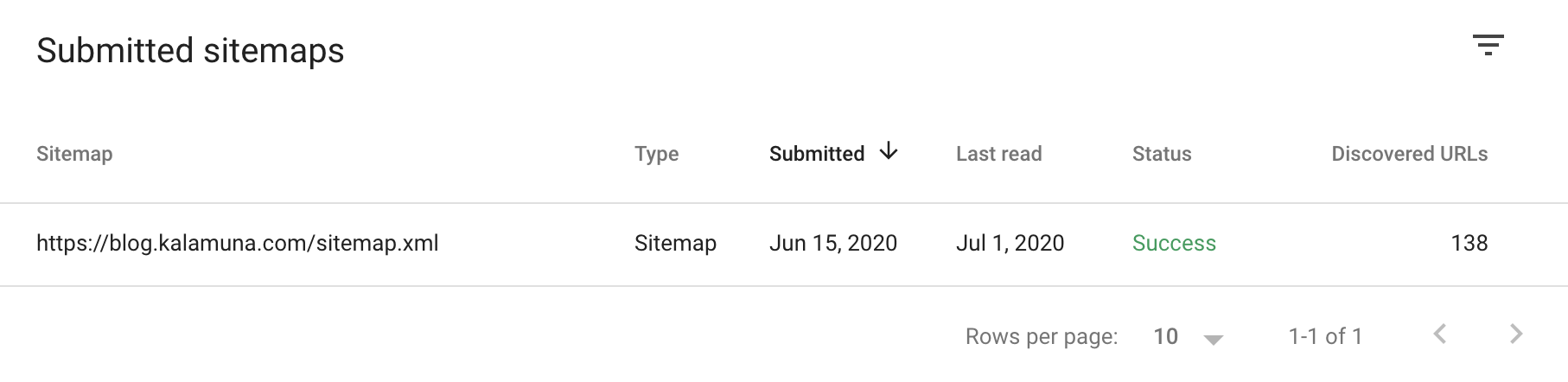

Once you have verified that your sitemap exists and that it contains the right pages, go to Google Search Console.

If you have not signed up for an account with Google Search Console, do so now.

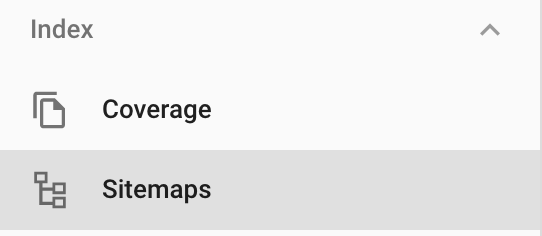

Once inside Search Console, click Sitemaps in the sidebar navigation menu.

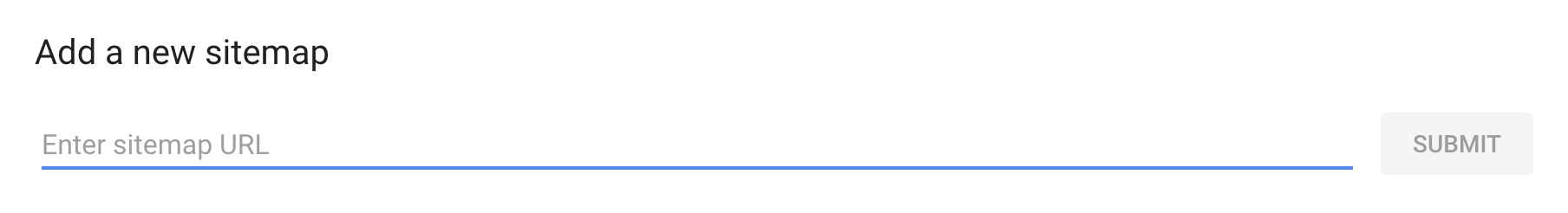

Enter your sitemap's URL in the "Add a new sitemap" field and click Submit.

If Google can find your sitemap, you should see a new entry for it listed below the field.

Search Console also provides valuable feedback on the general health and performance of your website. Subscribe to our newsletter to get notified when we publish a deeper-dive article on this topic.

Exclude pages from Google using robots.txt

Your sitemap helps Googlebot know what it should crawl; your robots.txt file, on the other hand, tells it what to ignore.

There may be portions of your site that you never intended a visitor to see, and others you’d prefer aren’t googleable.

Common pages to disallow in a robots.txt file include:

- Login page (if you don't allow visitors to log in)

- Administration pages

- Intentional duplicate pages (like print-friendly pages)

- Thank you pages

- Search result pages

- Comments

- Tag pages

There are many other pages, and reasons, to add to your robots.txt file, so this will require some forethought.

So where is your robots.txt file? It must sit at the root of your domain (yoururl.com/robots.txt), which typically corresponds to the root directory of your web server, where all your website files are stored. It should look something like this:

User-agent: *
Disallow: /admin/
Disallow: /comment/reply/
Disallow: /search*

The first line of this file, User-agent: *, says these rules apply to ALL bots! This includes Googlebot, Googlebot-Image, Bingbot, Slurp (Yahoo), and others.

The following lines of this file simply say, "YOU ARE NOT ALLOWED TO GO HERE, HERE, AND HERE!"

Be careful what gets added to this file. As we said before, if a page is disallowed in this file, well-behaved bots will not crawl it.

If your robots.txt file contains something like the following:

User-agent: *
Disallow: /

Nothing on your site will be crawled. Search engines won't be able to read any of your pages, so your site will effectively disappear from search results.

So be very careful that anything you include in this file is intentional. Otherwise, you may be hiding important pages from Google.
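Before publishing a change to robots.txt, it can help to test which URLs it actually blocks. Python's standard library ships a parser, `urllib.robotparser.RobotFileParser`, that answers "may this user agent fetch this URL?" The sketch below wraps it in a hypothetical `check_paths` helper and uses example.com URLs. One caveat: this parser does simple prefix matching and does not implement Google's `*` and `$` wildcard extensions, so a rule like `Disallow: /search*` may not be evaluated the way Googlebot evaluates it. Treat the result as a sanity check, not as Google's verdict.

```python
from urllib.robotparser import RobotFileParser

# The example robots.txt from this section, minus the wildcard rule
# (RobotFileParser does not understand Google's "*" path wildcards).
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /comment/reply/
"""


def check_paths(robots_txt, urls, user_agent="Googlebot"):
    """Map each URL to True if robots_txt lets user_agent crawl it, else False."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


if __name__ == "__main__":
    results = check_paths(ROBOTS_TXT, [
        "https://www.example.com/admin/settings",
        "https://www.example.com/programs/computer-science",
    ])
    for url, allowed in results.items():
        print("allowed" if allowed else "BLOCKED", url)
```

Running the same check against `User-agent: *` followed by `Disallow: /` reports every URL as blocked, which is an easy way to confirm you haven't shipped that rule by accident.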

2. Eliminate any technical issues harming your search ranking

The next step in basic SEO health is to make sure your website is free of technical issues that may be preventing it from being crawled or ranking well.

Common technical SEO issues include, but are not limited to:

- No sitemap (detailed in the previous section)

- Missing or incorrect robots.txt file (detailed in the previous section)

- Slow page speeds

- Duplicate pages/content

- Missing alt tags

- Broken links

- Pages that aren't mobile-friendly

- Missing meta descriptions

- Missing title tags

- 404 pages

Some of these issues are simple to fix. If you use a CMS like Drupal or WordPress, plugins are available that let you add or edit meta descriptions and title tags directly in the page editor.
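For pages outside a CMS, or to audit many pages at once, a short script can catch the simplest of these issues. The sketch below uses Python's built-in `html.parser` to report a missing `<title>`, a missing meta description, and `<img>` elements without alt text. The names `OnPageAudit` and `audit` are hypothetical, and the check is deliberately naive: it flags an empty `alt=""` even though that is the correct choice for purely decorative images.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Collects the <title>, meta description, and images lacking alt text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.images_missing_alt = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # attribute values may be None, e.g. <img alt>
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = (attrs.get("content") or "").strip()
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt.append(attrs.get("src") or "(no src)")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit(html):
    """Return a list of human-readable problems found in one HTML page."""
    parser = OnPageAudit()
    parser.feed(html)
    parser.close()
    problems = []
    if not parser.title.strip():
        problems.append("missing <title>")
    if not parser.meta_description:
        problems.append("missing meta description")
    for src in parser.images_missing_alt:
        problems.append(f"image without alt text: {src}")
    return problems
```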

Duplicate pages and content can be consolidated or deleted. Broken links can be corrected. And 404 pages can either be redirected or restored if they were deleted accidentally.
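Finding broken links and 404s is easy to script as well. This is a minimal sketch assuming you already have a list of URLs to check (for example, the `<loc>` entries from your sitemap). `fetch_status` and `find_broken_links` are hypothetical names; the fetch function is passed in as a parameter so it can be swapped out, and it sends HEAD requests to avoid downloading page bodies. Some servers answer HEAD with a 405 error, so a 405 may be worth re-checking with a normal GET.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10.0):
    """Return the final HTTP status for url, or 0 if the server can't be reached."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        # urlopen follows redirects, so this is the status after any 301/302.
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:  # 4xx/5xx responses raise HTTPError
        return err.code
    except urllib.error.URLError:  # DNS failure, refused connection, etc.
        return 0


def find_broken_links(urls, fetch=fetch_status):
    """Return {url: status} for every URL that is unreachable or returns 4xx/5xx."""
    broken = {}
    for url in urls:
        status = fetch(url)
        if status == 0 or status >= 400:
            broken[url] = status
    return broken
```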

If you are not a technical person, other issues, like slow page speed, may require help from your website developer. Google Search Console will report many of these errors to you.

If you are looking for even deeper insights into the "crawlability" and overall SEO health of your website, tools like Ahrefs and Moz can provide an in-depth analysis of your SEO efforts.

We will be diving deeper into how to solve these common technical SEO issues in future articles.

Once your website or page is free and clear of technical SEO issues, it's time to move on to the next step.

3. Match your content to your audience’s search intent

Every marketer wants their organization’s website to be at the top of their audience’s search results. To strategically achieve this, we recommend researching keywords, which can be a tricky endeavor. Keywords are the words and phrases in your content that make it possible for searchers to find your website when using search engines.

While ranking for high-level keywords with large search volumes can be great, such keywords often reveal little about your audience's actual intent.

Let's look at 2 examples:

Someone searches the following:

“Universities”

If you are an institution of higher education, it’s likely that you want to rank for the “Universities” keyword. However, ranking for this particular keyword tells you very little about the searcher’s intent.

Search intent is the ultimate goal of the person using a search engine. Their intent in this context may be to find answers, compare services, or purchase products.

What page on my site should rank for this keyword? What does the person searching Google plan to do with the information they find? Are they in the research phase or are they considering applying to a school?

It becomes very difficult for you as the site owner to determine what to do with people who come to your site via keyword searches such as this.

Let’s take a look at another example. A person searches Google for the following:

“Computer science universities near me with an accelerated program”

While the search volume (the number of searches performed each month) for this particular keyword may be significantly lower than that of the previous one, the phrasing is very actionable.

Based on this search we know several things about the person:

- They are looking for local universities

- They are interested in computer science

- They are in need of an accelerated program

Search phrases like these, known as long-tail keywords, make it much easier to provide users searching Google with content that satisfies their needs.

If you offer such a program, we recommend creating a dedicated page about your adult-oriented accelerated computer science degree so that users searching for this term find exactly what they’re after.

If you don’t have an accelerated program, we recommend creating a page optimized for the same phrase (see step 5 below) that stresses the benefits of a four-year degree.

Long-tail keywords like these offer greater insight into searchers’ intent. Based on that intent, we can determine what actions users are likely to take in response to the information we present, which in turn informs how we write the content.

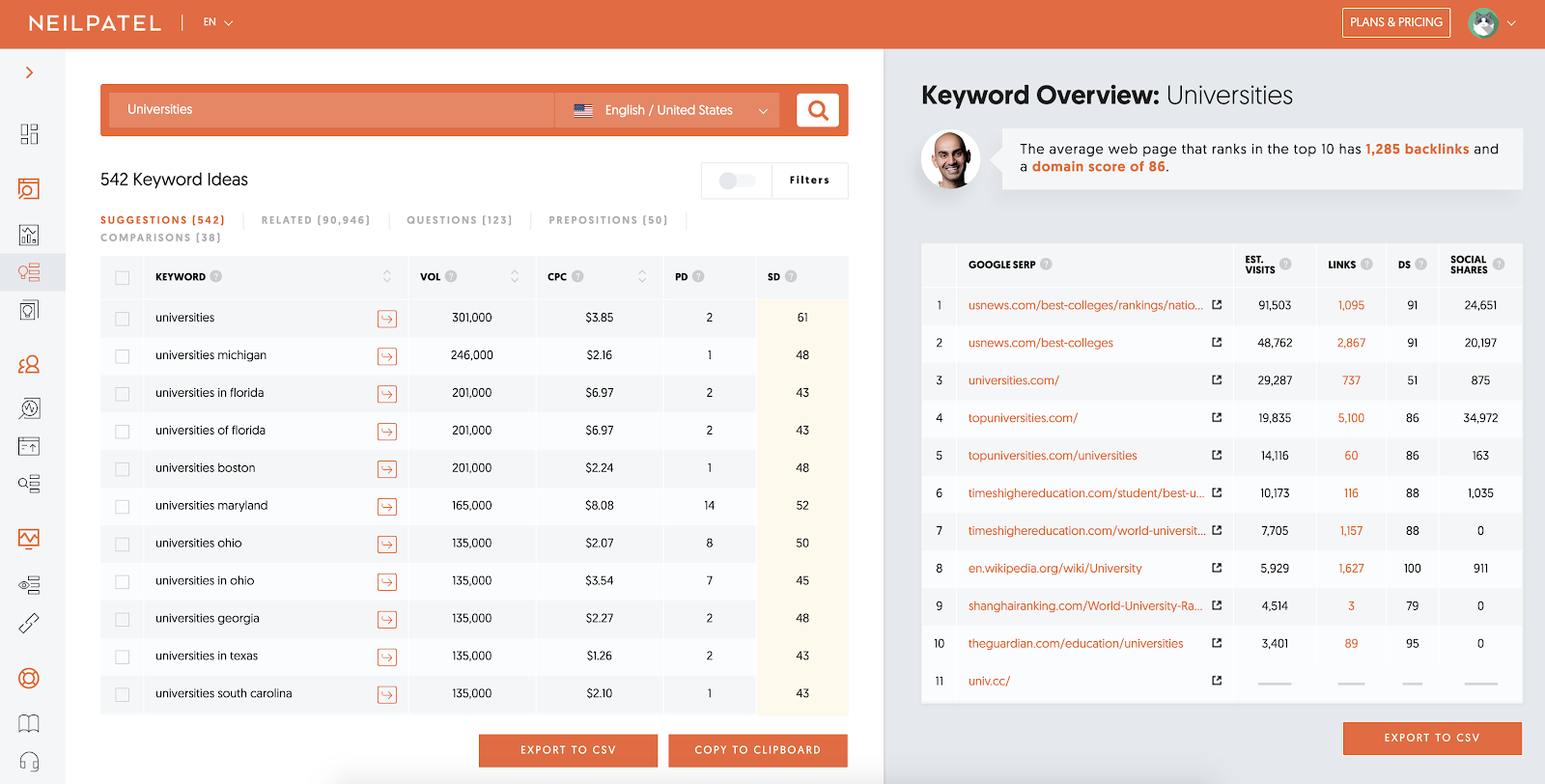

Example Keyword Research for the term “Universities”

There are many great tools available to perform what is known as keyword research. There are paid tools like Ahrefs and Moz Pro as well as free tools such as Google Ads Keyword Planner. For this article, we will be utilizing Ubersuggest, a free tool offered by Neil Patel.

We can see that the search volume for the keyword “Universities” is quite high and that marketplace demand for it is fairly high. Running ads against this keyword would cost us more than $3 per click.

We can also see the sites we would be competing against to rank for this keyword. The tool also offers other helpful keyword suggestions, along with the search volume and SEO difficulty of each.

The top tab also gives us related keywords, questions, prepositions, and comparisons that oftentimes have a lower SEO difficulty, offering us alternative keywords and phrases that are being searched that may be easier to rank for.

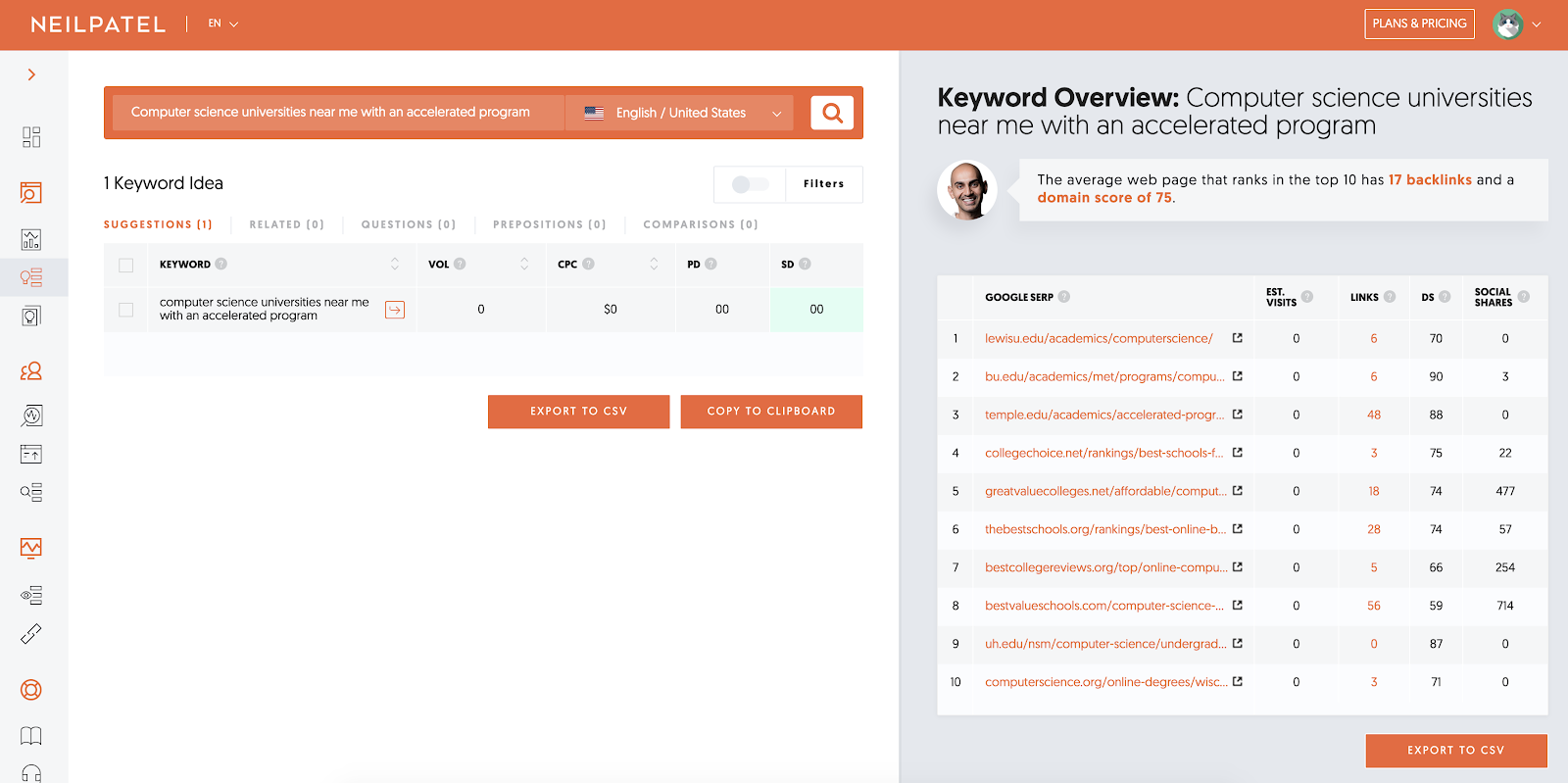

Now let’s look at our long-tail keyword.

One very important detail to note here: a search volume of 0 does not necessarily mean this keyword receives no searches each month. It simply means the search volume (the number of times this phrase is searched per month) is so low that it can’t be measured accurately.

However, notice the SEO difficulty: there is almost no competition for this keyword. Looking at the sites that do rank for it, we can see that one even has a page with over 700 social shares. This suggests that while this long-tail keyword is not searched often, the content people find for it is very valuable to them.

This high-level look at keyword research shows that the best strategy targets both long-tail and short-tail keywords. While short-tail keywords offer the most reach, long-tail keywords have more potential to drive action.

4. Write quality content

Google, as it typically does, keeps changing the search algorithms that determine the order of search results, and engagement signals like these are widely believed to play a role:

- Time on page

- Bounce rate

Time on page is exactly what you might think: the amount of time a visitor spends on your webpage. Bounce rate is the percentage of visitors who come to your website, view a single page, and leave. The idea is that the longer visitors stay on a page, the more value that page likely offers, and the more deserving it is of a higher position in search results.
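The two metrics can be made concrete with a little arithmetic. The sketch below uses the simple definitions given above: bounce rate is single-page sessions divided by all sessions, and time on page is averaged over recorded views. The session data, page paths, and function names are hypothetical, and real analytics tools measure these differently (Google Analytics 4, for instance, defines bounce rate in terms of sessions that were not "engaged"), so your dashboard numbers will not match this calculation exactly.

```python
def bounce_rate(sessions):
    """Percentage of sessions that viewed exactly one page.

    Each session is a list of the page paths the visitor viewed.
    """
    if not sessions:
        return 0.0
    bounces = sum(1 for pages in sessions if len(pages) == 1)
    return 100.0 * bounces / len(sessions)


def average_time_on_page(views, page):
    """Mean seconds spent on page, given (path, seconds) records for each view."""
    times = [seconds for path, seconds in views if path == page]
    return sum(times) / len(times) if times else 0.0


if __name__ == "__main__":
    # Hypothetical data: four sessions, two of which viewed a single page.
    sessions = [["/programs"], ["/programs", "/apply"], ["/"], ["/", "/programs", "/apply"]]
    print(f"Bounce rate: {bounce_rate(sessions):.0f}%")
```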

To rank more effectively, we recommend two things:

- Write complete content

- Write valuable content

Complete content refers to the idea of leaving nothing out. If our intent-based keyword research tells us what searchers are looking for, why would we give them only half a solution?

Valuable content refers to content that is useful to people. Unique, fresh, and complete content offers the most value.

Complete content does not mean giving away so much that your products and services are no longer necessary. But if we know searchers are looking for computer science degrees with an accelerated program, don’t leave out crucial information that would help them understand what your program is. Complete content simply satisfies the needs and intent of the searcher.

5. Optimize the SEO of key pages on your site

On-page SEO refers to the process of optimizing a single page in order to rank higher and attract more relevant traffic. Any on-page SEO effort will be a losing battle unless you are first delivering quality content (see step 4). But once that’s in place, the only way to go is up (in your rankings).

Keyword Usage for Humans

In the past, many tried to improve their search rankings through a practice called keyword stuffing: hiding keywords somewhere on the page, invisible to site visitors, and repeating them hundreds of times to rank higher. These practices no longer work, and they can actually have severe negative effects on your SEO efforts.
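If you suspect a page has drifted toward stuffing, measuring how much of the text is the keyword itself makes the repetition visible. This hypothetical `keyword_density` function returns the percentage of words that belong to non-overlapping occurrences of the keyword, using a simple lowercase word tokenizer (an assumption; it ignores stemming and synonyms). Google does not publish a "safe" density, so the number is only a signal for a human editor to reread the page, not a target to hit.

```python
import re

# Crude tokenizer: runs of lowercase letters, digits, and apostrophes.
WORD = re.compile(r"[a-z0-9']+")


def keyword_density(text, keyword):
    """Percent of words in text that belong to (non-overlapping) keyword matches."""
    words = WORD.findall(text.lower())
    target = WORD.findall(keyword.lower())
    if not words or not target:
        return 0.0
    n, hits, i = len(target), 0, 0
    while i <= len(words) - n:
        if words[i:i + n] == target:
            hits += 1
            i += n  # jump past this match so occurrences don't overlap
        else:
            i += 1
    return 100.0 * hits * n / len(words)
```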

Still, if you want to rank for a particular keyword, it is important to use it, and to use it correctly. So where should you use your keyword to get the most out of your efforts?

- In the first 100-150 words of your page

- In the H1 (top-level heading) on your page

- In your subheading tags (H2, H3, etc)

- In the URL for the page

- In your meta description (this may or may not be used by search engines but it’s important to fill this in)

- In image alt and title tags

- And wherever it logically makes sense to use it in content
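A checklist like this can be automated. The following sketch, built around a hypothetical `keyword_report` helper on top of Python's `html.parser`, reports whether a phrase appears in the H1, the subheadings, the meta description, image alt text, the first 150 words of visible text, and the URL. It uses plain case-insensitive substring matching and assumes URL slugs separate words with hyphens, so it will miss plurals and variations; treat a `False` as a prompt to review the page, not as a rule to force the keyword in.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
INVISIBLE = {"title", "script", "style"}  # text here isn't part of the page copy


class KeywordPlacement(HTMLParser):
    """Gathers text from each place where a keyword is recommended."""

    def __init__(self):
        super().__init__()
        self.sections = {"h1": "", "subheadings": "", "meta description": "", "image alt": ""}
        self.words = []       # visible text, in document order
        self._headings = []   # stack of currently open heading tags
        self._invisible = 0   # depth inside <title>, <script>, or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in HEADINGS:
            self._headings.append(tag)
        elif tag in INVISIBLE:
            self._invisible += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.sections["meta description"] += " " + (attrs.get("content") or "")
        elif tag == "img":
            self.sections["image alt"] += " " + (attrs.get("alt") or "")

    def handle_endtag(self, tag):
        if tag in HEADINGS and self._headings and self._headings[-1] == tag:
            self._headings.pop()
        elif tag in INVISIBLE and self._invisible:
            self._invisible -= 1

    def handle_data(self, data):
        if self._invisible:
            return
        if self._headings:
            key = "h1" if self._headings[-1] == "h1" else "subheadings"
            self.sections[key] += " " + data
        self.words.extend(data.split())


def keyword_report(html, url, keyword, first_n_words=150):
    """Map each placement to True/False depending on whether keyword appears there."""
    parser = KeywordPlacement()
    parser.feed(html)
    parser.close()
    kw = keyword.lower()
    report = {name: kw in text.lower() for name, text in parser.sections.items()}
    opening = " ".join(parser.words[:first_n_words]).lower()
    report[f"first {first_n_words} words"] = kw in opening
    # Assumes URL slugs join words with hyphens, e.g. /accelerated-computer-science
    report["url"] = kw.replace(" ", "-") in url.lower()
    return report
```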

Google stresses that we write content for humans and not robots. Neil Patel has written a great article on the topic of writing for people while optimizing for robots. Forcing the use of keywords where they do not make sense will backfire and may actually harm your SEO.

Link to other sites

While linking to another website (outbound linking) may seem counterproductive when you are trying to optimize your own, the opposite is actually true. Linking to credible sources in your article is a great way to support the accuracy of your content in a sea of misinformation. For example, we recommend this great article by Winnie Wong for SEO Pressor describing how outbound linking increases relevance, improves reputation, boosts value, and encourages backlinks.

Note: If feasible, reach out to anyone you’ve quoted or linked to in your article with a note of appreciation and a link to your article. If they decide to share your content, you could find yourself with a big SEO win!

Link to other pages on your site

Internal links connect your page to other pages in your domain and are beneficial in a couple of different ways.

First, they can lower your bounce rate by offering visitors more content to browse.

Second, linking from pages with a high SEO score to those with a lower one can help the weaker pages rank higher.

To get the most from an internal link, use keyword-rich anchor text. Do not simply paste the URL of the other page; you will be wasting a valuable SEO opportunity.

Example: If you’re remotely interested and want to learn more, Lily wrote a post on how to Throw an Epic Remote Office Party.
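To catch links that waste this opportunity, you can scan a page for internal links whose anchor text is empty or is just a URL. In this sketch, `weak_internal_links` and the example.com URLs are hypothetical; a link counts as internal when it resolves to the same host as the page, and "looks like a URL" is approximated by text beginning with `http://`, `https://`, `www.`, or `/`.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Records (href, anchor text) for every <a href="..."> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())  # normalize whitespace
            self.links.append((self._href, text))
            self._href = None


def looks_like_url(text):
    return text.lower().startswith(("http://", "https://", "www.", "/"))


def weak_internal_links(html, page_url):
    """Return (absolute URL, anchor text) for internal links with URL-like or empty text."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    host = urlparse(page_url).netloc
    weak = []
    for href, text in collector.links:
        absolute = urljoin(page_url, href)
        if urlparse(absolute).netloc != host:
            continue  # external link; outside the scope of this check
        if not text or looks_like_url(text):
            weak.append((absolute, text))
    return weak
```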

Encourage Content Sharing

When someone shares a page on your website, it’s like they are giving your page an upvote.

The more votes that a page has, the higher it can rank.

Adding social share buttons to your web pages can go a long way toward making your content more shareable. Also, make sure your metadata is set up properly so that when people share your content, it appears in an attractive way on social channels.
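One common way to set up that sharing metadata is the Open Graph protocol: `<meta property="og:...">` tags in the page's `<head>` that many social platforms read when building a link preview. This minimal sketch generates them with a hypothetical `social_meta_tags` helper; `og:title`, `og:type`, `og:image`, and `og:url` are the protocol's basic properties, and `og:description` is optional. Individual platforms may also read additional tags of their own.

```python
from html import escape


def social_meta_tags(title, description, url, image_url, og_type="article"):
    """Return Open Graph <meta> tags (one per line) for a page's <head>."""
    properties = {
        "og:title": title,
        "og:type": og_type,
        "og:url": url,          # canonical URL of the page being shared
        "og:image": image_url,  # absolute URL of the preview image
        "og:description": description,
    }
    # escape() keeps quotes and ampersands from breaking the attribute value.
    return "\n".join(
        f'<meta property="{name}" content="{escape(value, quote=True)}">'
        for name, value in properties.items()
    )


if __name__ == "__main__":
    print(social_meta_tags(
        "5 Tips To Get Top SEO Results",
        "Five basic SEO techniques that are easy to implement.",
        "https://www.example.com/blog/seo-tips",
        "https://www.example.com/images/seo-tips.png",
    ))
```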

Conclusion

This is a 5,000-foot view of SEO and is designed to get you started on the journey of optimizing your website and ranking better in search engines.

In subsequent articles, we will take a deep dive into each of these topics and more. For now, these tips will go a long way toward increasing your search visibility. We encourage you to put them into practice and see the results!

Want to dive deeper? Drop us a line.